Digital computing is struggling to keep pace with the explosion of huge data sets and the demand for higher-performing technologies, for example in machine learning and artificial neural networks. Memristor crossbar arrays could accelerate computations in such applications: once programmed with stable analog values, they perform matrix operations directly in memory, without moving the data to a separate processor.
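The in-memory matrix operation rests on two circuit laws. Each memristor passes a current equal to the applied voltage times its conductance (Ohm's law), and the currents that share a column wire add up (Kirchhoff's current law). Below is a minimal sketch of an idealized crossbar, assuming perfect wires, linear devices and no noise. The function name and example values are illustrative and are not taken from the study.

```python
from typing import List


def crossbar_currents(voltages: List[float], conductances: List[List[float]]) -> List[float]:
    """Return the column currents (A) of an ideal crossbar.

    voltages[i] is the voltage (V) applied to row i;
    conductances[i][j] is the conductance (S) of the memristor at row i, column j.
    Ohm's law gives each cell current V_i * G_ij, and Kirchhoff's current law
    sums those currents along each column: I_j = sum_i V_i * G_ij.
    """
    n_rows = len(conductances)
    if len(voltages) != n_rows:
        raise ValueError("one input voltage is needed per crossbar row")
    n_cols = len(conductances[0])
    return [
        sum(voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Hypothetical 2 x 2 example: 0.1 V and 0.2 V on the rows, conductances in siemens.
currents = crossbar_currents([0.1, 0.2], [[1e-4, 2e-4], [3e-4, 4e-4]])
print(currents)
```

Every column settles to its sum at the same time. This is why a single read step delivers a whole matrix-vector product rather than one multiply-accumulate at a time.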

In their communication in Advanced Materials, Prof. Joshua Yang and Prof. Qiangfei Xia from the University of Massachusetts, Amherst, together with Dr. John Paul Strachan from Hewlett Packard Labs, present a memristor platform for analog computation and forecast an energy efficiency greater than 100 trillion operations per second per watt, at least 16 times higher than that of purely digital solutions.

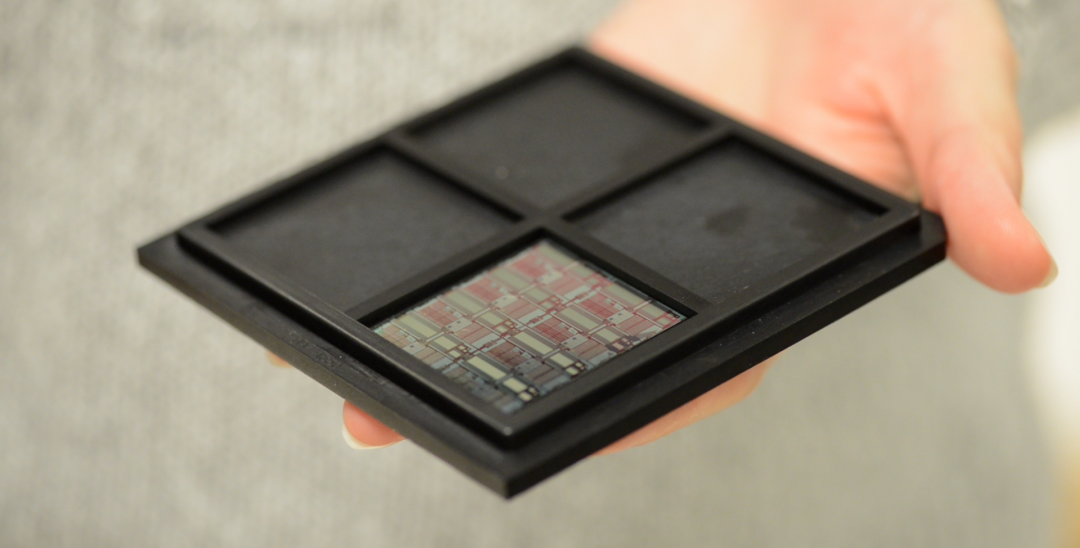

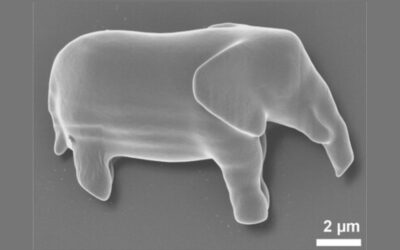

The memristor crossbar arrays are fabricated from hafnium oxide and built from one-transistor, one-memristor (1T1R) cells. This circuit structure allows each memristor to be accessed individually during programming. The cells achieve stable conductance levels with 6 bits of precision.
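Six bits of precision means each cell can be set to one of 2^6 = 64 distinguishable conductance levels. Below is a minimal sketch of mapping a trained weight onto such levels. It assumes the levels are evenly spaced between a minimum and a maximum conductance and that weights have already been scaled into [0, 1]. The conductance range and the function name are hypothetical, and the study's actual programming scheme may differ.

```python
def quantize_conductance(weight: float, g_min: float, g_max: float, bits: int = 6) -> float:
    """Map a weight in [0, 1] to the nearest of 2**bits evenly spaced conductances (S)."""
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be scaled into [0, 1] first")
    levels = 2 ** bits                      # 64 levels for 6 bits
    step = (g_max - g_min) / (levels - 1)   # spacing between adjacent levels
    index = round(weight * (levels - 1))    # nearest level index, 0 .. levels - 1
    return g_min + index * step


# Hypothetical conductance range of 10 uS to 100 uS.
g = quantize_conductance(0.5, 10e-6, 100e-6)
print(g)
```

Any weight that falls between two levels is rounded to the nearer one. The 6-bit figure therefore bounds how finely the trained network's weights can be reproduced in hardware.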

The team programmed a single-layer softmax neural network into the arrays and used it to demonstrate handwriting recognition with an overall accuracy of 89.9%. Each input image is unrolled into a vector and applied to the memristor crossbars, and the resulting output vector yields the classification result. For some individual images, the experimental system even outperformed the digitally trained software network.
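The classification pipeline can be sketched in a few lines. The image is unrolled into a vector and multiplied by the weight matrix, a product the crossbar computes physically as column currents. Softmax then turns the outputs into class probabilities. Below is a minimal sketch that assumes an idealized crossbar, a tiny 2 × 2 image, and random placeholder weights instead of trained conductances. The function names are hypothetical.

```python
import math
import random
from typing import List


def unroll(image: List[List[float]]) -> List[float]:
    """Flatten a 2-D image row by row into a 1-D input vector."""
    return [pixel for row in image for pixel in row]


def softmax(scores: List[float]) -> List[float]:
    """Convert raw outputs into probabilities (max subtracted for numerical stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(image: List[List[float]], weights: List[List[float]]) -> int:
    """Single-layer softmax network: weights[i][j] links input i to class j."""
    x = unroll(image)
    # In hardware, scores[j] corresponds to the current collected on crossbar column j.
    scores = [
        sum(x[i] * weights[i][j] for i in range(len(x)))
        for j in range(len(weights[0]))
    ]
    probs = softmax(scores)
    return max(range(len(probs)), key=probs.__getitem__)


random.seed(0)
image = [[0.0, 1.0], [1.0, 0.0]]                                   # 4 pixels
weights = [[random.random() for _ in range(3)] for _ in range(4)]  # 4 inputs, 3 classes
print(classify(image, weights))
```

Softmax preserves the ordering of its inputs, so the predicted class is simply the column with the largest output. The softmax step matters mainly during training and for confidence estimates.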

The forecast was calculated from the energy efficiency of a 128 × 64 memristor array, which performs tens of thousands of operations in a single step. This extrapolation gives a theoretical computational efficiency of 115 trillion operations per second per watt.
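One way to count the operations per step is to treat each of the 128 × 64 cells as one multiply-accumulate and each multiply-accumulate as two operations (a common convention, assumed here). That count gives 16,384 operations per matrix-vector step. Operations per second per watt equals operations per joule, because one watt is one joule per second. The sketch below inverts that relation to estimate the energy per step implied by the quoted 115 trillion operations per second per watt. The per-step energy is derived from these assumptions and is not a value reported in the study.

```python
ROWS, COLS = 128, 64
OPS_PER_MAC = 2  # assumption: one multiply plus one add per cell


def ops_per_step(rows: int, cols: int, ops_per_mac: int = OPS_PER_MAC) -> int:
    """Operations in one analog matrix-vector product on a rows x cols crossbar."""
    return rows * cols * ops_per_mac


def efficiency_ops_per_joule(ops: int, energy_per_step_j: float) -> float:
    """Ops per second per watt equals ops per joule, since 1 W = 1 J/s."""
    return ops / energy_per_step_j


ops = ops_per_step(ROWS, COLS)   # 128 * 64 * 2
target = 115e12                  # 115 trillion ops/s/W, as quoted in the text
implied_energy_j = ops / target  # energy per step that would yield the target efficiency
print(ops, implied_energy_j * 1e12, "pJ")
```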

This study provides a baseline for future analog computing systems, which could find use in low-power portable computing devices.

To find out more about memristor crossbar arrays for analog computation, please visit the Advanced Materials homepage.