After 50 years as a chemistry research professor at the University of Toronto, I still feel the undiminished thrill of materials discovery by tried-and-true human intelligence and experiential learning. By happenstance, however, I now find myself working alongside colleagues equally interested in and excited by materials discovery made by blue-sky artificial intelligence and machine learning.

Curious about what the future holds for the synthetic chemistry professor and his co-workers, I imagined how prof-bots and co-bots in self-driving chemistry laboratories could replace and render them obsolete (see my recent article Autonomous chemical synthesis).

This made me wonder whether a prof-bot could supplant my other role as a teaching professor, working with teaching assistants in the traditional lecture theater of learning. I am not talking here about the massive open online course known as the MOOC, where one replaces the so-called “sage on the stage” by a “scholar on a screen.” Rather, I am conjecturing whether the wide-ranging tasks and the vast repertoire of skills of the university teaching professor are replaceable by a robotic equivalent that is better able to educate students than a human educator.

I imagine this is an unthinkable thought and an unacceptable scenario for most of my professorial colleagues. They know the importance of the professor/student human interaction and its personal touch when it comes to the art and science of teaching.

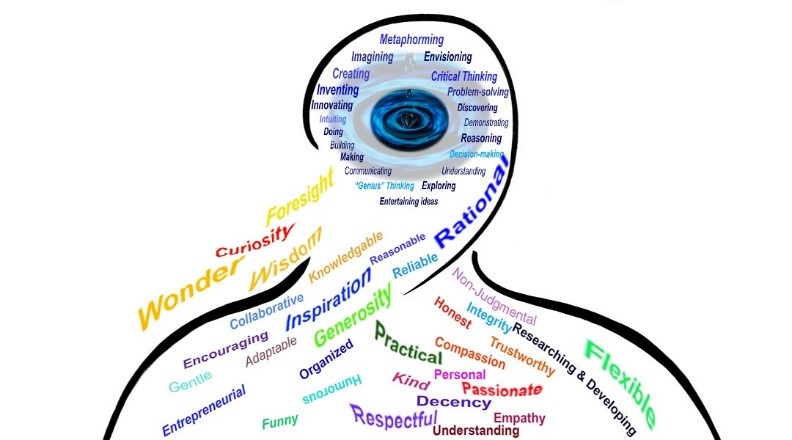

Teaching is an inherently human endeavor requiring higher-order traits than simple knowledge transfer. It requires pervasive qualities that include and transcend the skills of communication, judgement, empathy, passion, understanding, foresight, insight, reason, respect, generosity, rationality, humor, motivation, inspiration, organization, reliability, encouragement, a non-judgemental attitude, adaptation, resonance, synergy, oversight, relationships, objectivity, curiosity, honesty, problem solving, critical and independent thinking, innovative ideas, creative solutions, and thirst for knowledge, to name but a few.

Excellent teachers understand this evidence-based truth: The ability, or inability, to learn determines our health, happiness, self-worth, and success. The act of learning, and of applying what we learn as wisely and productively as possible, is an act of creation. Will this truth hold for the best of our creative artificial intelligence (AI), virtual intelligence (VI), and machine learning systems as well? How many of these outstanding characteristics will these advanced systems have, given that they may eventually have the capacity to realize human/machine potential in unimaginable ways? That is one open-ended question.

These are exceptionally challenging talents for an autonomous teaching professor to acquire beyond the purview of simple knowledge transfer. Recall my last article on autonomous chemistry laboratories in which I imagined synthetic chemists as we know them today could become obsolete, as referred to above.

I would argue that it is not beyond the realm of possibility that with supercomputer power, big data, artificial intelligence, and machine learning, all-knowledge-endowed robot educators and robot assistants could rise to match or even outperform professors and teaching assistants in the theater of higher learning.

It is conceivable that all of the concepts and principles of chemistry that have been recorded in textbooks and the open literature could be read and analyzed by a learning machine and coded to find the best possible approach for a humanoid professor to educate undergraduate and graduate students in the classroom. I could similarly envision developments in artificial intelligence and machine learning that would enable the robot professor to create computer-based assignments and examinations and complete the marking tasks on time.

This is not a far-fetched proposal. Last year, the first intelligent robot, the brainchild of digital teaching pioneer Professor Jürgen Handke, was trialed delivering lectures at the Philipps University of Marburg in Germany. Named Yuki, the knowledgeable and approachable humanoid functioned well as a co-bot, assisting the professor with lectures and tests, evaluating the academic performance of the students, and determining the kind of support they needed. Similar developments are underway in other countries.

I imagine this is just the tip of the iceberg. The question is whether Yuki will remain just a teaching assistant or, with artificial intelligence-based self-learning, will expand and enhance its teaching prowess to the point where it can replace the professor in his or her traditional role as teacher. Whether university professors would end up believing this inhuman state of university education would improve the student learning experience is another question that I leave to professional chemistry educators.

Keep in mind, however, that there are cost-benefit counter-forces at work in this potentially disruptive emerging educational technology: an autonomous chemistry professor works 24-7, has lower running costs, needs no office, is easily replaced, is immune to illness, has no family problems, requires no coffee or lunch breaks, and never asks for a vacation.

What is next for Prof-Bots?

Twenty years from today we may be wondering how it was possible to research and teach chemistry without Prof-Bots and Co-Bots! Considering the daunting abundance of new knowledge created and shared at a constant, dizzying pace through labyrinths of supercomputers conversing with “their own kind” of gnarly networks, all-human faculty members and students would never have been able to manage the continuum of complexity we are now experiencing.

In fact, it would be more difficult without the aid of Prof-Bots and Co-Bots working tirelessly to teach us not just the rudiments of chemistry but how best to apply those concepts and principles to manage such vexing climate change phenomena as greenhouse gas emissions, permafrost methane escape, and ozone depletion. Human “learning systems” would be throwing in the towel — or waving the white flag of surrender — to the unbearable global climate we have created. On the other hand, we would be burying our heads in the sand just hoping and praying that our selfless, life-saving innovations could do the impossible on our behalf: save the human race from the physical and mental limits of its own ingenuity. Somehow, we gave ourselves a second chance at surviving in the inhospitable cosmos. Moreover, we human beings are simply grateful for their essential aid and relief.

Be that as it may, we sense there are tremendous trade-offs for this aid. Arguably, none is greater than the indescribable touch of humanity: what Dow Chemical Company called “The Human Element” in June 2006, when they boldly launched their advertising campaign heralding their commitment to tap human potential in solving global problems. In this regard, they recognized the uniquely humanistic qualities we are aiming to imbue or impart to our world of robots now serving our every need and every aspect of our lives, and without which we could not survive, much less thrive. When you ponder the importance of teaching S.T.E.M. and S.T.E.A.M. knowledge and practices in preparing learners for working in chemistry, how many schools serving high school and university students will need to integrate AI, machine learning, and VI systems into their instructional technology programs? And not just for their chemistry courses! How pressed will our learning organizations become trying to engage young learners at all costs, if only to ensure they remain competitive nationally and internationally?

When we think about that word “competitive”, other real human concerns pop up. Welcome to the New Age of Real-Life (and true-to-life) “Sibling Rivalry,” so to speak — rivalry among virtually intelligent human/machine systems. Unlike our “younger”, heavily programmed and regulated AI closed systems, the new and improved virtually intelligent open systems will self-learn in ways that surprise even the most knowledgeable computer programmers and engineers. Will these advanced, self-learning “systems” become as fiercely competitive as humans do? Perhaps the manufacturers of Prof-Bots felt it was a great idea to offer learning organizations a wide range of personalities to prepare students for real-world human and machine interactions.

This point raises yet another basic question concerning the evolution of our vision for education and the machine-enhanced tools and operatives we will need. If one could count the number of schools in sovereign nations that are currently experimenting with and adopting AI and virtually intelligent systems, this would give us some idea of how fast the projected adoption will happen; and, more to the point, how fast the robots will be teaching us. Out of necessity.

So one immediate task is to understand the various ways these virtually intelligent and machine learning systems are being used. Are they primarily being applied to the chemical, physical, biological, and engineering sciences and mathematics, like Wolfram MathWorld, which is hosted by Wolfram Research, the company founded by Stephen Wolfram, the British-American computer scientist, theoretical physicist, and entrepreneur? Or are they being envisioned and engineered with much broader plans and deeper applications for serving businesses, industry, and learning organizations worldwide?

Ultimately, Prof-Bots can bridge academia and everyday learning, engaging students at an early age to learn how to best self-learn. In doing so, they will be simultaneously preparing minds for a lifetime of innovating and problem solving.

We hope the Prof-Bots will teach us something profound about our humanity, and not just grow our technology profoundly. They will certainly possess the means to help us see ourselves more clearly and deeply: like looking in a plain mirror and correcting our mistakes by seeing them as they occur, or catching sight of them before they occur. Consequently, we can learn to use the mirror to discover new possibilities of thinking and innovating.

Finally, we must always keep in the forefront of our imagination how these Prof-Bots do not have to resemble humans or act like humans in order to teach us — or teach themselves. They can be as non-anthropomorphic as a Samsung refrigerator, a table, or a Tesla car with machine learning and virtual intelligence capabilities. In fact, as we experience daily with the bounty of practical, virtually intelligent interactive tools, they can take any form or size imaginable.

In short: these teaching appliances might think like gods, but will look like amoebas! Never mind beautiful sentient “Sophia” and “Ester” — or the bipedal Herculean robot, “Atlas” — the Prof-Bots can look as mundane as a house fixture and yet think as brilliantly and beautifully as Einstein … or not.

Written by: Geoffrey Ozin¹ and Todd Siler²

¹ Solar Fuels Group, University of Toronto, Toronto, Ontario, Canada, Email: [email protected], Web sites: www.nanowizard.info, www.solarfuels.utoronto.ca, www.artnanoinnovations.com.

² ArtScience, Denver, Colorado, USA, Email: [email protected], Web site: www.toddsilerart.com/home.