A long-standing dream in the semiconductor industry is to construct a brain-like computing system on silicon chips. Recently, neuromorphic computing has been proposed as a means of emulating the working modes of neurons and synapses in hardware, and has been hailed as the next-generation computing paradigm for the era of big data and artificial intelligence.

However, a key challenge for building a neuromorphic computing system is recreating content-based memory structures found in the brain, which are dramatically different from the address-based storage in classical computers.

In a recent paper published in Advanced Intelligent Systems, Yuchao Yang and colleagues at Peking University have shown that such human-like memory structures can be constructed using memristors, which are regarded as the fourth fundamental passive circuit element alongside resistors, capacitors and inductors.

Due to their internal working dynamics, memristors can change their resistance in response to external electrical stimulation, much as biological synapses change their strength. In their study, the team has proposed and simulated a memristor-based physical system that uses discrete attractor networks to implement associative memory, a typical content-based memory phenomenon in which the system remembers relationships between seemingly unrelated items or recalls complete information from a damaged version of it.
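
To make "recalling complete information from a damaged version" concrete, here is a minimal sketch of a textbook Hopfield-style discrete attractor network in plain Python. It is not the authors' memristor model: the Hebbian outer-product weights, the network size, the three stored patterns and the names `train_hebbian` and `recall` are all assumptions chosen for illustration.

```python
def train_hebbian(patterns):
    """Outer-product (Hebbian) weights with zero self-connections."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w

def recall(w, state, max_sweeps=10):
    """Asynchronous sign updates, repeated until no neuron changes."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            h = sum(w[i][j] * s[j] for j in range(n))  # input to neuron i
            new = 1 if h >= 0 else -1
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break
    return s

# Three mutually orthogonal +1/-1 patterns over 32 neurons (illustrative data).
n = 32
patterns = [[1 if (i >> a) & 1 == 0 else -1 for i in range(n)] for a in range(3)]
w = train_hebbian(patterns)

# Damage the first pattern by flipping four of its entries.
damaged = list(patterns[0])
for i in (0, 5, 11, 30):
    damaged[i] = -damaged[i]

restored = recall(w, damaged)
print(restored == patterns[0])
```

Each stored pattern sits at an attractor of the update dynamics, so a state that starts close to one of them is pulled back to it. The content of the memory, rather than an address, selects what is retrieved.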

The desired information is encoded in the attractors of the network. By introducing competition and cooperation among neurons through an online learning method known as Oja's rule, the team increased the storage capacity of the system tenfold compared with previous methods, while also improving robustness and tolerance to device imperfections.
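
For readers unfamiliar with Oja's rule, the sketch below shows its textbook single-neuron form, Δw = η·y·(x − y·w) with y = w·x, next to a plain Hebbian update. It only illustrates the general property that Oja's decay term keeps weights bounded. The input stream, learning rate and function names are assumptions, and the network-level scheme used in the paper is not reproduced here.

```python
import math

def oja_step(w, x, eta):
    """One Oja update: Hebbian growth minus a decay proportional to y^2."""
    y = sum(wi * xi for wi, xi in zip(w, x))
    return [wi + eta * y * (xi - y * wi) for wi, xi in zip(w, x)]

def hebb_step(w, x, eta):
    """Plain Hebbian update, with nothing to limit weight growth."""
    y = sum(wi * xi for wi, xi in zip(w, x))
    return [wi + eta * y * xi for wi, xi in zip(w, x)]

def norm(w):
    return math.sqrt(sum(wi * wi for wi in w))

# Illustrative 2-D input stream with most of its variance along the first axis.
inputs = [(2.0, 0.5), (2.0, -0.5), (-2.0, 0.5), (-2.0, -0.5)]

w_oja = [0.1, 0.1]
w_hebb = [0.1, 0.1]
for step in range(2000):
    x = inputs[step % len(inputs)]
    w_oja = oja_step(w_oja, x, eta=0.01)
    w_hebb = hebb_step(w_hebb, x, eta=0.01)

print("Oja weight norm:    ", norm(w_oja))   # settles close to 1
print("Hebbian weight norm:", norm(w_hebb))  # grows without bound
```

Under Oja's rule the weight vector settles near unit length and aligns with the dominant direction of the inputs, while the purely Hebbian weights keep growing.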

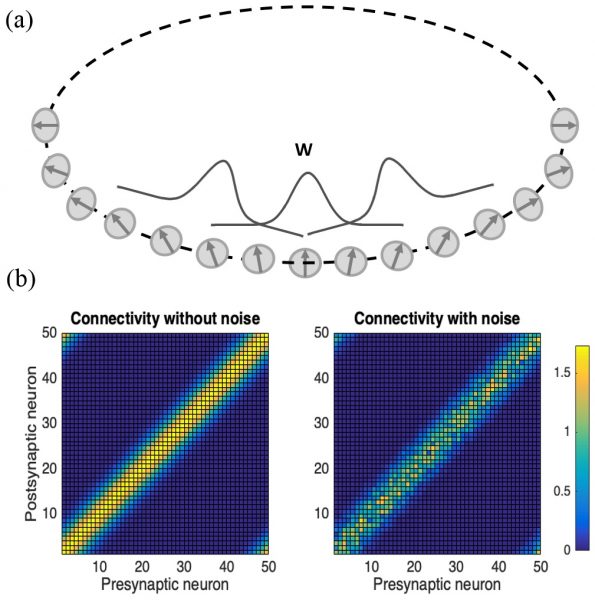

By extending the discrete attractor neural network to a continuous attractor neural network (CANN), the researchers made memristor-based working memory possible for the first time, demonstrating the potential to dynamically store and track external stimuli. They also systematically investigated the influence of device characteristics on network performance and found that noise from different sources affects the CANN's ability to maintain dynamic information in different ways: read noise shifts the center of network activity, whereas write noise can cause it to split.
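
One common way to quantify the "center of network activity" in a ring-shaped CANN is the population vector, that is, the circular mean of neuron positions weighted by activity. The sketch below illustrates that measurement only. It does not simulate network dynamics or device noise; whether the authors use this estimator is an assumption, and the Gaussian bump shape, its width and the function names are hypothetical.

```python
import math

def bump(n, center, width=0.5):
    """Gaussian-shaped activity over n neurons spaced evenly on a ring."""
    acts = []
    for i in range(n):
        theta = 2 * math.pi * i / n
        # Shortest signed angular distance from this neuron to the bump center.
        d = math.atan2(math.sin(theta - center), math.cos(theta - center))
        acts.append(math.exp(-d * d / (2 * width ** 2)))
    return acts

def decode_center(acts):
    """Population-vector estimate of the bump center, in [0, 2*pi)."""
    n = len(acts)
    x = sum(a * math.cos(2 * math.pi * i / n) for i, a in enumerate(acts))
    y = sum(a * math.sin(2 * math.pi * i / n) for i, a in enumerate(acts))
    return math.atan2(y, x) % (2 * math.pi)

activity = bump(128, center=1.0)
estimate = decode_center(activity)
print(estimate)
```

With an estimator of this kind, a shift of the bump appears as a change in the decoded angle. A bump that has split into two is no longer well described by a single center, which is one reason the two noise effects are qualitatively different.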

This work represents a significant advance in memristor-based neuromorphic systems that can approach biologically plausible neural networks and could pave the way for truly intelligent hardware systems. Looking into the future, the team hopes to combine the continuous attractor neural networks with existing supervised learning systems on physical memristor crossbars.

Research article available at: Y. Wang, et al. Advanced Intelligent Systems, 2020, doi.org/10.1002/aisy.202000001

Kindly contributed by the authors