Human-machine interfaces are becoming more efficient, smaller, easier to use, and more exciting every day. The touch-screen of a smartphone, including its icons, images, and menus, allows the layman to master a complex device in a matter of hours.

Human-machine interfaces for intention recognition and sensory feedback.

However, in the field of assistive robotics, for instance in prosthetics, fast co-adaptation of human and machine is not yet common. Biocompatible sensors need to be physically and stably connected to a user’s body, and the related electronics and computational power must be wearable and unobtrusive. Most importantly, the user’s intent must be detected and reliably turned into control commands for the prosthesis in real time. This calls for human-machine interaction strategies that lead to a continuously improving life-long symbiosis.

The ideal interface simultaneously interprets a user’s desires and provides feedback through sensory substitution, which might quickly lead to changes in the perceived self and its presumably underlying sense of body ownership and agency. Therefore, human-machine interfaces are effective tools for the neuroscientist and the experimental psychologist, providing the possibility to study adaptive processes between humans and machines over long periods of time in order to shed new light on the plasticity of the human brain and body.

In this WIREs Cognitive Science review, Prof. Philipp Beckerle, Dr. Claudio Castellini and Prof. Bigna Lenggenhager take a multidisciplinary approach at the intersection of psychology, robotics, and artificial intelligence to sketch potential steps toward the ideal bidirectional human-machine interface.

While wearable haptics, robot skins, and high-density sensors and stimulators are developing quickly, interfaces that process and handle information to foster co-adaptation of humans and machines are still far from real-world application.

The current study analyzes the interplay between human cognition and interface performance: How could the human and the machine work together more tightly, mutually adapt to each other, and make a hand prosthesis feel like one’s own hand rather than a tool?

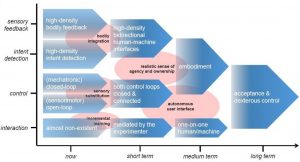

The suggested research roadmap for understanding and developing bidirectional human–machine interfaces that enable robotic experiments, which in turn advance the investigation of embodiment and embodied cognition.

The team proposes a research roadmap with short-, medium-, and long-term goals to finally overcome the current limitations of human-machine interfacing systems and simultaneously improve our understanding of human body-related cognition.

It is well known, for instance, that one never forgets how to swim, ski, or ride a bike. What are the requirements for a bidirectional human-machine interface to achieve the same effect? Can we, at least in principle, enforce embodiment in a prosthetic arm? How far can we go in altering one’s sense of ownership and agency in the world? How important and useful would this be?

The authors identify four subfields of robotics and psychology in which new technologies and adaptive interaction techniques must be developed to raise the future acceptance of rehabilitation and assistive devices, which is still very low, at least in upper-limb prosthetics.

While these are important endpoints, exciting new possibilities for a deeper understanding of human cognition will arise from this research along the way.

“We are facing various crucial challenges to interconnect between research areas in the near future. The best thing about it is that our results might generalize to a wider range of applications, which would benefit from intuitive control, e.g., industrial, medical, and rescue applications,” says Prof. Beckerle.