Picture this: after months, possibly years, of work, you and your colleagues decide to submit what you consider to be your most prized, original, and adventurous research contributions to an elite journal, only to be told that they are not sufficiently innovative, significant, and technologically relevant. Your work is curiously dismissed as being “not novel enough”, “not a sufficient conceptual advance”, or “not of broad enough interest” to even make it past the editor’s desk for peer review.

This experience is all too common among modern-day academics. Yet these same works are somehow scientifically novel, important, and intriguing enough to qualify for peer review in Tier 2 “sister” journals.

What constitutes novelty and importance? There are many examples of highly novel ideas that are unimportant. Conversely, some very incremental advances are themselves highly important. Moreover, while novelty can perhaps be judged at a specific point in time, importance cannot.

Something very strange seems to be happening in the reputable science publishing houses. Seriously bold, exploratory work that aims to expand, enrich, and excite a field of scientific research, rather than timorously venture into incrementalism, is being pushed down to related business enterprises that operate under the auspices of Tier 1 publishing groups.

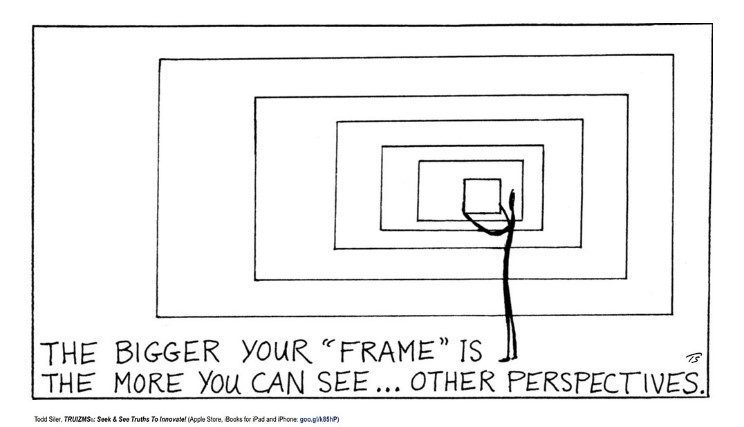

Ask yourself what kind of advanced exploratory work counts as “visionary”, “breakthrough”, or “boundary-breaking”, and which of it deserves rigorous peer evaluation by Tier 1 science publishers. Why are so many outstanding papers by distinguished minds, intent on challenging the prevailing view of the establishment, swept aside or cast into the “interesting work” yet “not sure it’s novel enough” pile?

How many times have you heard from an editor of an elite journal that there is overwhelming demand for the limited page space in the journal and that 95% of all submissions never make it out of the starting gate?

Do you get the impression that editors of these highest-impact journals are drowning in the crushing number of “breakthrough” research papers submitted for publication, with little capacity left to search for the next big thing buried among them?

Global scientific output roughly doubles every nine years, and this paper tsunami, which can only get worse, poses an especially serious challenge for editors of leading journals, who act as gatekeepers deciding which submissions to send out for peer review. A proper evaluation of whether a paper meets the criteria of a “conceptual advance, beyond state-of-the-art, a radically new way of thinking, a technologically disruptive development” must be extremely challenging for an editor simply because of the sheer volume of “frontier” work in disparate areas crossing their desk.

Also concerning is how this situation creates the perfect storm for gender biases, both conscious and unconscious, to play out. Female researchers are less likely than their male counterparts to use the term “novel” or other flattering language to describe their work, and the diversity-innovation paradox in science adds to the problem.

Did you ever wonder whether the Matthew Effect of accrued benefit, expressed as “the rich get richer and the poor get poorer”, is intentionally or unintentionally influencing selection criteria at the peer review gate?

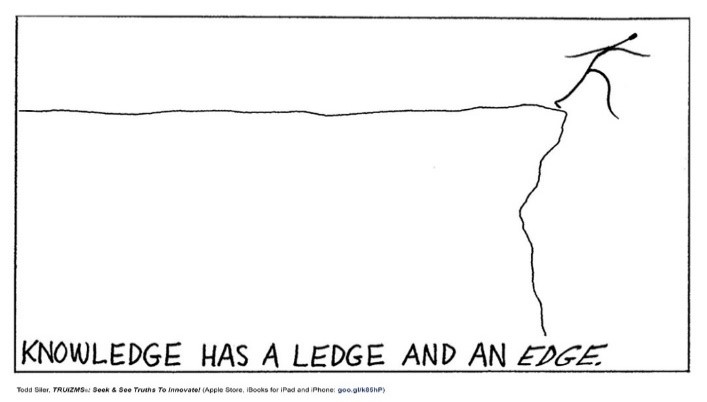

Maybe we need to rethink how and where we showcase our breakthroughs? Does it really have to be the highest impact factor journals? Is the motivation salary, promotion, and awards? Do the university ranking organizations need to rethink their models and criteria? Does our affair with the h-index give a true measure of creativity and innovation, or does it merely reflect the ability to write a classical review article or an opinion piece, or to strategically publish only your very best?

Particularly affected by the current system are young scientists, caught between the stress of the paper tsunami on the one hand and the demands of the university ranking organizations on the other. Sometimes they do not receive the acknowledgement, tenure, and funding they deserve because their papers do not find a home in the respected journals by which the university ranking organizations judge scientists. The system equally challenges young investigators and students who are trying to understand this incredibly complicated moving target.

In a highly competitive educational business environment, more and more students (national and international) migrate to a university based on its global ranking. Is it time to rethink our values in higher education and place real science and social creativity as the key criteria in this sector rather than profit and loss?

Besides, for whom are we really doing the work, them or us? Are we not doing research for the joy of discovery, the exhilaration of unveiling the next big thing, the satisfaction of transforming an idea into a technology or social value, and the excitement of creating and sharing knowledge for the benefit of all? Does it really matter where great work ends up published? Even if the work does not make it into the elite journals, if it is great, there is a good chance it will receive the recognition it deserves from those in your field who matter. There are some interesting examples of extremely highly cited papers in obscure journals.

The system may have worked at one time, but now it seems that we need to look at whether it is serving the interests of any of the three stakeholders, namely, the scientists and innovators of the scientific community, the publishers and editors, and the university organizations.

A possible way forward is for the three stakeholders to come together and work out a better, more effective way to appraise the scholarly research of scientists and innovators, one that ultimately benefits society while giving fair compensation to the publishers and meeting the legitimate requirements of funding agencies, professional societies, and universities.

Now, the COVID-19 crisis has, for the first time in recent history, granted academics an opportunity to slow down their engines and look beyond the mass publishing frenzy. Rather than adamantly trying to maintain the previous pace of our research in the midst of a global pandemic, we academics might instead use this time to reflect and rethink the current systems under which we operate.

Should the impact of our research on society be limited by the judgement of elite journals? Should h-index and impact factor trump mentorship and community outreach in tenure evaluations? Will the traditional measures of excellence within academia even be relevant to future generations? Our potential for creativity, innovation, and societal contribution need not be limited by the bar set by elite journals, nor by outdated metrics defined by the Ivory Tower of the past.

Written by: Geoffrey Ozin[1] and Todd Siler[2,3]

[1]Solar Fuels Group, University of Toronto, Toronto, Ontario, Canada, Email: [email protected], Web sites: www.nanowizard.info, www.solarfuels.utoronto.ca, www.artnanoinnovations.com. [2]ArtScience Productions, Denver, Colorado, USA, Email: [email protected], Web Site: www.toddsilerart.com/home.

Postscript

Many colleagues who also recognize the urgency of finding ways to enhance the methods by which the products of their scholarly work are appraised have provided views that have been incorporated into this opinion editorial. Some of these are included below in an effort to improve current practices.

Back in the old days when quality work was published in highly specialized journals facing small communities, or low-impact-factor journals, authors were not aiming for high-impact-factor journals even if they had conceived something novel and thought-provoking, or discovered something truly extraordinary.

In fact, eight examples of breakthrough scientific research were rejected by top-ranked journals and went on to win the Nobel Prize. The loss for the community is not that great works miss the chance to be published in high-impact journals, but that they are likely to end up in specialized journals with much smaller readerships. There they miss the opportunity to be read, appreciated, further studied, and applied by a large number of researchers.

Elite journals are attracting more and more attention from researchers, while other journals are getting much less exposure, another manifestation of the Matthew Effect.

Useful reference works on this subject are quoted below.

“Our analyses show that underrepresented groups produce higher rates of scientific novelty. However, their novel contributions are devalued and discounted: For example, novel contributions by gender and racial minorities are taken up by other scholars at lower rates than novel contributions by gender and racial majorities, and equally impactful contributions of gender and racial minorities are less likely to result in successful scientific careers than for majority groups.”