Quantum computers are fundamentally different from their classical counterparts, with the potential to solve certain problems much faster than even the world’s fastest supercomputers can.

But a fundamental question within the field has been whether quantum computers can overcome the current limitations of standard machine learning algorithms, such as their heavy demands on memory and computational resources.

Machine learning is a data analysis technique that, in a sense, mimics the processes of human intelligence: the software learns from data, identifies patterns, and makes decisions automatically. Nowadays, its applications range from home automation systems to autonomous cars, from face and voice recognition to medical diagnostics.

Quantum computers and machine learning

One of the fields where machine learning may be very efficient is big data processing, where a typical problem is classification — that is, breaking data down into separate categories.

In these types of problems, it is convenient to first achieve a geometric representation of the data. For example, if there are only two classes of objects located on a plane, machine learning can be used to find a line that separates them, so that all points on the same side of the line are assigned to the same class. If the objects are defined in a higher-dimensional space, the line is replaced by a plane, or its multi-dimensional counterpart known as a hyperplane.
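As a minimal sketch of this idea, the Python snippet below assigns points on a plane to one of two classes according to the side of a line they fall on. The line's coefficients `w` and `b` and the sample points are made up for illustration; in practice they would be learned from data.

```python
import numpy as np

def classify(points, w, b):
    """Return +1 or -1 depending on which side of the line w.x + b = 0 each point lies."""
    return np.where(points @ w + b >= 0, 1, -1)

# Hypothetical separator: the line x = y (illustrative values only)
w = np.array([1.0, -1.0])
b = 0.0

points = np.array([[2.0, 1.0], [0.5, 3.0], [4.0, 0.0], [-1.0, 1.0]])
labels = classify(points, w, b)
print(labels.tolist())  # [1, -1, 1, -1]
```

The same function works unchanged in higher dimensions, where `w` and `b` describe a hyperplane rather than a line.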

However, data is not necessarily represented geometrically and its distribution in the original set can be quite erratic, making the division into classes not obvious and hard to implement. In these cases, it is useful to first map (i.e., embed) the data into a higher-dimensional space where the separator is easier to find and draw.
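A classical toy example, not taken from the study, illustrates why embedding helps. Two concentric rings of points cannot be separated by any straight line in the plane, but adding the squared distance from the origin as a third coordinate makes them separable by a flat plane. The radii and the threshold below are assumptions chosen for illustration.

```python
import numpy as np

def embed(points):
    """Map 2D points (x, y) to 3D points (x, y, x^2 + y^2)."""
    r2 = np.sum(points ** 2, axis=1, keepdims=True)
    return np.hstack([points, r2])

# Two rings of points: radius 0.5 (class A) and radius 2.0 (class B)
angles = np.linspace(0, 2 * np.pi, 8, endpoint=False)
ring = np.column_stack([np.cos(angles), np.sin(angles)])
inner, outer = 0.5 * ring, 2.0 * ring

embedded_inner = embed(inner)
embedded_outer = embed(outer)

# In the embedded space, the plane z = 1 separates the two classes
threshold = 1.0
inner_below = bool(np.all(embedded_inner[:, 2] < threshold))
outer_above = bool(np.all(embedded_outer[:, 2] > threshold))
print(inner_below, outer_above)
```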

In a 2020 study, scientists proposed a specific embedding technique that maps the original data into a special kind of high-dimensional space using a quantum computer. The space, known as a Hilbert space, is used in quantum mechanics to describe all possible states of a quantum system, and the corresponding map is called the quantum embedding.

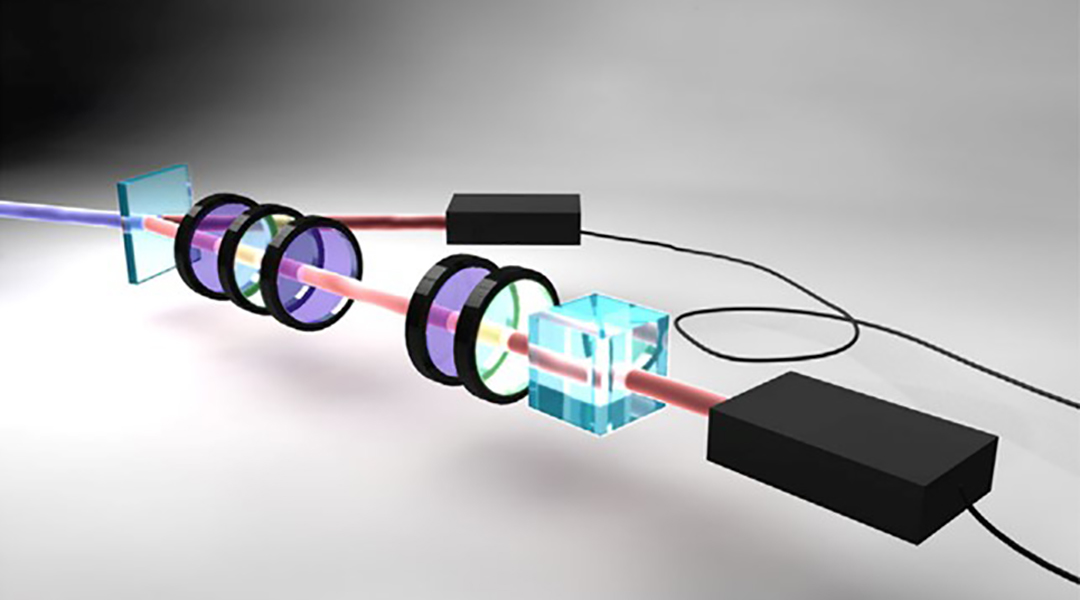

To test these theoretical considerations, a team of physicists led by Filippo Caruso, professor at the European Laboratory for Nonlinear Spectroscopy (LENS) and the Department of Physics and Astronomy at the University of Florence, designed and tested a quantum embedding protocol on two different engineered experimental quantum platforms. They then compared the results with those obtained on a cloud-available quantum processor provided by Rigetti Computing.

“Quantum computers are not just more powerful versions of current computers,” said Ilaria Gianani, one of the study’s authors from the Science Department of Roma Tre University. “They interpret information in radically different ways, and this difference is what allows them to ‘see’ patterns that a standard machine could not. This study is aiming to test and compare experimentally a protocol embedding classical data into quantum data, where the latter could be processed more efficiently and quickly.”

Quantum data can be more convenient to process with machine learning than the original classical data, given the possibility of exploiting superposition states (quantum parallelism) and properties such as entanglement that do not exist in the classical domain. But in the new study, even the first step in solving the classification problem, namely the mapping of classical objects into a larger space, was done using a quantum technique.

“The embedding of classical data into quantum data happens via a sequence of quantum operations (also called quantum gates) depending on some free parameters that allow to ‘learn’ how to represent any classical data and possibly classify them in a higher dimensional space by means of more efficient and feasible linear separators,” explained Lorenzo Buffoni, another of the study’s authors.
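To make the idea of a parameterized embedding concrete, here is a heavily simplified sketch simulated with NumPy. It is not the circuit used in the study: it assumes a single qubit, a single rotation gate about the Y axis, and two hypothetical free parameters (`scale` and `offset`) that turn a classical number `x` into a rotation angle. The overlap between two embedded states indicates how distinguishable they are.

```python
import numpy as np

def ry(angle):
    """Matrix of a single-qubit rotation about the Y axis."""
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    return np.array([[c, -s], [s, c]])

def embed(x, params):
    """Embed a classical number x into a qubit state (illustrative assumption)."""
    scale, offset = params           # hypothetical free parameters
    ket0 = np.array([1.0, 0.0])      # the qubit starts in state |0>
    return ry(scale * x + offset) @ ket0

def overlap(x1, x2, params):
    """Squared overlap of two embedded states: 1 = identical, 0 = orthogonal."""
    return float(np.dot(embed(x1, params), embed(x2, params)) ** 2)

params = (np.pi, 0.0)
same = overlap(0.3, 0.3, params)       # identical inputs give identical states
different = overlap(0.0, 1.0, params)  # these inputs map to orthogonal states
print(same, different)
```

With these parameter values, the inputs 0 and 1 are mapped to orthogonal states, which a measurement can tell apart perfectly. Other parameter values would leave the two states partially overlapping, which is why the parameters need to be tuned.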

Quantum embedding put to the test

The application of quantum embedding was carried out in two steps. First, the physicists determined the optimal parameters for the quantum embedding, which simplifies the data classification problem. This step was done offline on a classical computer, as it was a relatively easy numerical optimization.

Next, the circuit was implemented on quantum machines to test how well they carry out the theoretically proposed method in practice. This two-step scheme is called hybrid quantum computing.
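The offline optimization step can be sketched classically as follows. This is an illustration under stated assumptions, not the procedure from the study: the embedding is a one-qubit rotation with a single hypothetical parameter `scale`, the toy data sets are invented, the cost is the average overlap between states of different classes, and a simple grid search stands in for the numerical optimizer.

```python
import numpy as np

def embed(x, scale):
    """Hypothetical one-qubit embedding: rotate |0> about Y by the angle scale * x."""
    angle = scale * x
    return np.array([np.cos(angle / 2), np.sin(angle / 2)])

def mean_overlap(class_a, class_b, scale):
    """Average squared overlap between embedded points of different classes."""
    overlaps = [
        np.dot(embed(a, scale), embed(b, scale)) ** 2
        for a in class_a
        for b in class_b
    ]
    return float(np.mean(overlaps))

# Invented toy data: two small clusters of one-dimensional points
class_a = [0.0, 0.1]
class_b = [0.9, 1.0]

# Grid search over the free parameter, run entirely on a classical computer
scales = np.linspace(0.0, 2 * np.pi, 201)
costs = [mean_overlap(class_a, class_b, s) for s in scales]
best_scale = float(scales[int(np.argmin(costs))])
best_cost = min(costs)

print(best_scale, best_cost)
```

A low cost means the two classes are mapped to nearly orthogonal quantum states. In a hybrid scheme, the parameter values found this way would then be loaded into the circuit that runs on the quantum hardware.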

The quantum platforms that the researchers tested were based on different physical realizations of quantum bits, or qubits (the quantum version of bits): quantum optics, ultracold atoms, and superconducting qubits. The study showed that all three architectures gave satisfactory results in realizing quantum embedding.

The fact that all three quantum systems, which are based on very different physical principles, gave very good results shows promise for future systems based on hybrid architectures with specialized hardware for storage, processing, and distribution of quantum data.

“The most significant outcome of our study is to further highlight the fact that hybrid quantum technologies might represent the future to solve real-world problems, hence exploiting the different features and advantages of complementary and versatile physics platforms together with real cloud-available quantum processors, and using new algorithms developed in the young but rapidly increasing and remarkably promising research field of quantum AI and quantum machine learning,” Caruso added.

Moreover, the advantages of the quantum embedding algorithm include not only the possibility of simplifying a classification problem, as experimentally demonstrated, but also the potential to speed up other kinds of classical data processing, such as searching through a database, feature extraction, image segmentation, and edge detection. This applies especially to the large volumes of data generated in domains ranging from sociology to economics, from geography to biomedicine.

Reference: Filippo Caruso, et al., Experimental Quantum Embedding for Machine Learning, Advanced Quantum Technologies (2022). DOI: 10.1002/qute.202100140