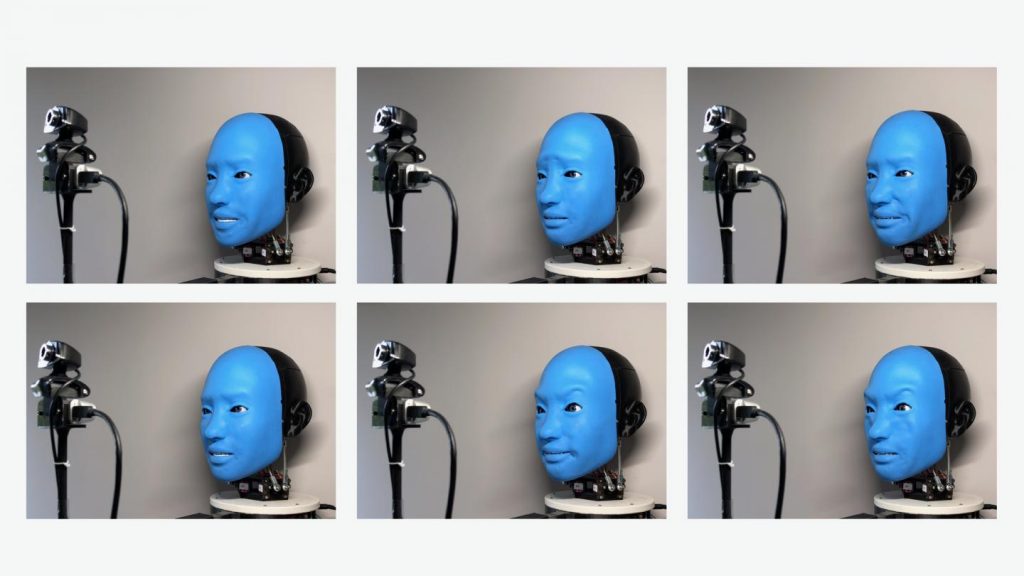

EVA mimics human facial expressions in real time from a live-streaming camera. Creative Machines Lab

Nonverbal communication is a significant part of our ability to communicate with one another, often carrying greater weight or impact than words spoken. The human face, in particular, is extremely expressive, capable of conveying countless subtle emotions.

This is an area of interest for researchers in robotics. As the field advances and robots become more integrated into our lives, building trust without this essential pillar of communication becomes a challenge. “Robots are intertwined in our lives in a growing number of ways, so building trust between humans and machines is increasingly important,” said Boyuan Chen, a Ph.D. student in the Creative Machines Lab at Columbia Engineering.

“There is a limit to how much we humans can engage emotionally with cloud-based chatbots or disembodied smart-home speakers,” added Hod Lipson, professor of mechanical engineering and director of the Creative Machines Lab. “Our brains seem to respond well to robots that have some kind of recognizable physical presence.”

Long interested in the interactions between robots and humans, researchers in the Creative Machines Lab at Columbia Engineering have been working for five years to create EVA, a new autonomous robot with a soft and expressive face that responds to match the expressions of nearby humans.

“The idea for EVA took shape a few years ago, when my students and I began to notice that the robots in our lab were staring back at us through plastic, googly eyes,” said Lipson.

He also noticed similar trends in grocery stores, where stock robots had been given name badges or, in one case, a hand-knit cap. “People seemed to be humanizing their robotic colleagues by giving them eyes, an identity, or a name,” he said. “This made us wonder, if eyes and clothing work, why not make a robot that has a super-expressive and responsive human face?”

The team built EVA as a disembodied bust that bears a strong resemblance to the silent but facially animated performers of the Blue Man Group. EVA can express the six basic emotions of anger, disgust, fear, joy, sadness, and surprise, as well as an array of more nuanced emotions, by using artificial “muscles” (i.e., cables and motors) that pull on specific points on EVA’s face, mimicking the movements of the more than 42 tiny muscles attached at various points to the skin and bones of human faces.

While this sounds simple, creating a convincing robotic face has been a formidable challenge for roboticists. For decades, robotic body parts have been made of metal or hard plastic, materials that were too stiff to flow and move the way human tissue does. Robotic hardware has been similarly crude and difficult to work with — circuits, sensors, and motors are heavy, power-intensive, and bulky.

“The greatest challenge in creating EVA was designing a system that was compact enough to fit inside the confines of a human skull while still being functional enough to produce a wide range of facial expressions,” noted Zanwar Faraj, an undergraduate researcher who led a team of students in building the robot’s physical “machinery”.

To overcome this challenge, the team relied heavily on 3D printing to manufacture parts with complex shapes that integrated seamlessly and efficiently with EVA’s skull. After weeks of tugging cables to make EVA smile, frown, or look upset, the team noticed that EVA’s blue, disembodied face could elicit emotional responses from their lab mates. “I was minding my own business one day when EVA suddenly gave me a big, friendly smile,” Lipson recalled. “I knew it was purely mechanical, but I found myself reflexively smiling back.”

Once the team was satisfied with EVA’s “mechanics,” they began to address the project’s second major phase: programming the artificial intelligence that would guide EVA’s facial movements. While lifelike animatronic robots have been in use at theme parks and in movie studios for years, Lipson’s team made two technological advances. EVA uses deep learning artificial intelligence to “read” and then mirror the expressions on nearby human faces. And EVA’s ability to mimic a wide range of different human facial expressions is learned by trial and error from watching videos of itself.

The most difficult human activities to automate involve non-repetitive physical movements that take place in complicated social settings. Chen, who led the software phase of the project, quickly realized that EVA’s facial movements were too complex a process to be governed by pre-defined sets of rules.

To tackle this, Chen and a second team of students created EVA’s brain using several deep learning neural networks. The robot’s brain needed to master two capabilities: first, to learn to use its own complex system of mechanical muscles to generate any particular facial expression, and second, to know which faces to make by “reading” the faces of humans.

To teach EVA what its own face looked like, Chen and team filmed hours of footage of EVA making a series of random faces. Then, like a human watching herself on Zoom, EVA’s internal neural networks learned to pair muscle motion with the video footage of its own face.

Now that EVA had a primitive sense of how its own face worked (known as a “self-image”), it used a second network to match its own self-image with the image of a human face captured on its video camera. After several refinements and iterations, EVA acquired the ability to read human facial gestures from a camera and to respond by mirroring that human’s facial expression.
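For readers who want a feel for the two steps described above, here is a toy sketch in Python. It is not the team's code, and it replaces the neural networks with a simple nearest-match lookup. The motor count, the landmark count, the `simulated_face` function, and the `MIXING` table are all invented for illustration. The sketch first records random "faces" together with the motor commands that produced them (the self-image step), then picks the recorded command whose face best matches a target face (the mirroring step).

```python
import random

# Hypothetical sizes; the article does not state EVA's motor or landmark counts.
NUM_MOTORS = 3

# Invented table: each row says how strongly each motor moves one face landmark.
MIXING = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.5, 0.5, 0.0],
]

def simulated_face(motors):
    """Toy stand-in for the physical face: motor commands in, landmark positions out."""
    return [sum(w * m for w, m in zip(row, motors)) for row in MIXING]

def babble(num_samples, seed=0):
    """Self-image step: make random faces and record (motor command, landmarks) pairs."""
    rng = random.Random(seed)
    samples = []
    for _ in range(num_samples):
        motors = [rng.random() for _ in range(NUM_MOTORS)]
        samples.append((motors, simulated_face(motors)))
    return samples

def squared_distance(a, b):
    """Sum of squared differences between two equal-length landmark lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mirror(target_landmarks, samples):
    """Mirroring step: return the recorded command whose face is closest to the target."""
    best_motors, _ = min(samples, key=lambda s: squared_distance(s[1], target_landmarks))
    return best_motors

# Usage: pretend these landmarks were extracted from a human face on camera.
samples = babble(2000)
human = simulated_face([0.9, 0.1, 0.5])
command = mirror(human, samples)
```

A lookup table like this only works for a tiny toy face. According to the article, EVA instead learns the pairing between muscle motion and its own appearance with neural networks, which can generalize to expressions it never recorded.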

The researchers note that EVA is a laboratory experiment, and mimicry alone is still a far cry from the complex ways in which humans communicate using facial expressions. But such enabling technologies could someday have beneficial, real-world applications. For example, robots capable of responding to a wide variety of human body language would be useful in workplaces, hospitals, schools, and homes.

Quotes and article adapted from press release provided by Columbia University

The research will be presented at the ICRA conference on May 30, 2021, and the robot blueprints are open-sourced in the journal HardwareX (April 2021).